Page Crawler

You can use HARPA AI as a page crawler using one of two approaches:

- Page fetching - This approach suits websites that are rendered server-side, when you don't need to navigate through links to gather information. However, it may not work on JS-heavy, client-side rendered websites, and it is not applicable to social networks and other platforms that restrict fetching.

- Navigate & Extract data - This approach is more versatile and lets you extract data from any resource, for example by processing a list of links sequentially with LOOPs.

In this guide, we will discuss the first approach. You can find the description of the NAVIGATE step in the AI Commands section.

Test and choose the approach that best suits your specific task.

# TL;DR: Page fetching

The {{page}} parameter supports query, url and limit arguments. For example, {{page Connect HARPA to OpenRouter https://harpa.ai limit=10%}} will search the https://harpa.ai website for information relevant to the query "Connect HARPA to OpenRouter", limiting the output to 10% of the LLM token context window.

Remember that the token limit includes both input and output. Therefore, keep the fetched content to a reasonable share of the context window, for instance 40%, to leave space for the prompt and a high-quality AI response.
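As a rough illustration of this budgeting, the sketch below converts percentage limits into token counts and adds them up. The 16,000-token context window and the helper name `tokens_for_percent` are assumptions made for the example, not values or functions from HARPA AI.

```python
def tokens_for_percent(context_window: int, percent: float) -> int:
    """Return how many tokens a percentage limit reserves in the context window."""
    return int(context_window * percent / 100)


context_window = 16_000          # assumed model context size, in tokens
page_limits = [20, 10, 10]       # three {{page ...}} calls: limit=20%, 10%, 10%

# Tokens reserved for fetched page content across all three calls.
fetched = sum(tokens_for_percent(context_window, p) for p in page_limits)

# Whatever is left must hold the rest of the prompt and the AI response.
remaining = context_window - fetched

print(fetched, remaining)
```

With these assumed numbers, the three fetches together take 40% of the window, which leaves the remaining 60% for the prompt text and the response.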

{{page query url limit=x%}} parameter options are:

- {{page url}} - will retrieve the web page content from the specified URL.

- {{page query}} - will scan the page content for text semantically similar to the given query and return that as a parameter value.

- {{page limit=500}} or {{page limit=10%}} - will cap the returned content at a number of tokens (500) or at a percentage of the model context window (10%).
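To make the three arguments easier to tell apart, here is a minimal sketch that splits the argument string of a {{page ...}} parameter into its query, url and limit parts. How HARPA AI actually parses the parameter is not documented here, so the rules below (a token starting with `http://` or `https://` is the URL, a token starting with `limit=` is the limit, everything else is the query) are assumptions, and `PageArgs` and `parse_page_args` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageArgs:
    query: str
    url: Optional[str]
    limit: Optional[str]


def parse_page_args(raw: str) -> PageArgs:
    """Split '<query words> <url> limit=<n or n%>' into its parts (assumed rules)."""
    query_words = []
    url = None
    limit = None
    for token in raw.split():
        if token.startswith("limit="):
            limit = token[len("limit="):]
        elif token.startswith(("http://", "https://")):
            url = token
        else:
            # Anything that is neither a URL nor a limit is treated as query text.
            query_words.append(token)
    return PageArgs(" ".join(query_words), url, limit)


args = parse_page_args("Connect HARPA to OpenRouter https://harpa.ai limit=10%")
print(args)
```

All three arguments are optional in this sketch, so `parse_page_args("https://harpa.ai")` yields an empty query and no limit.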

# Custom command example

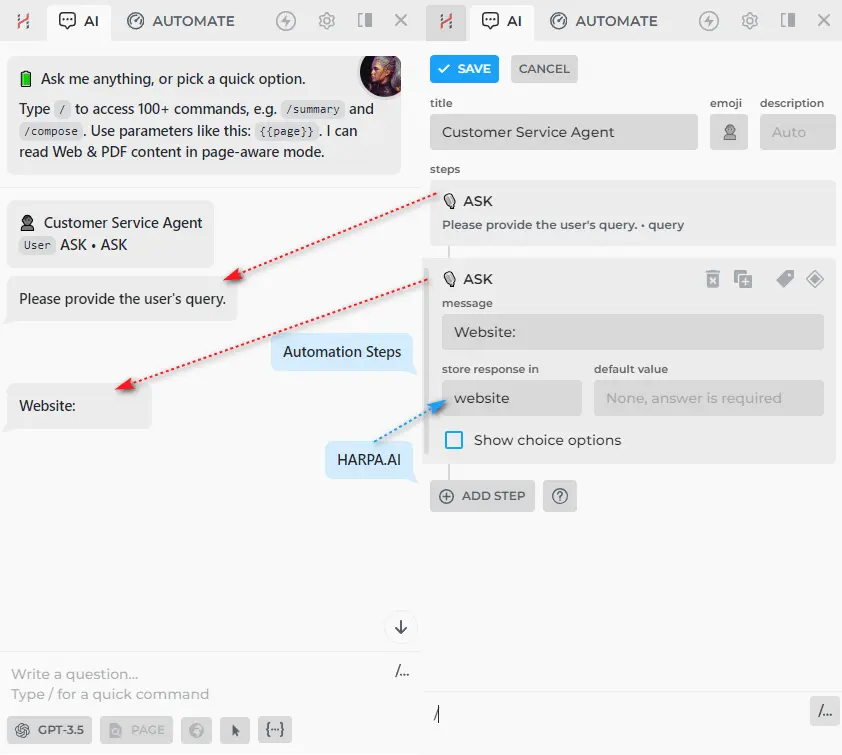

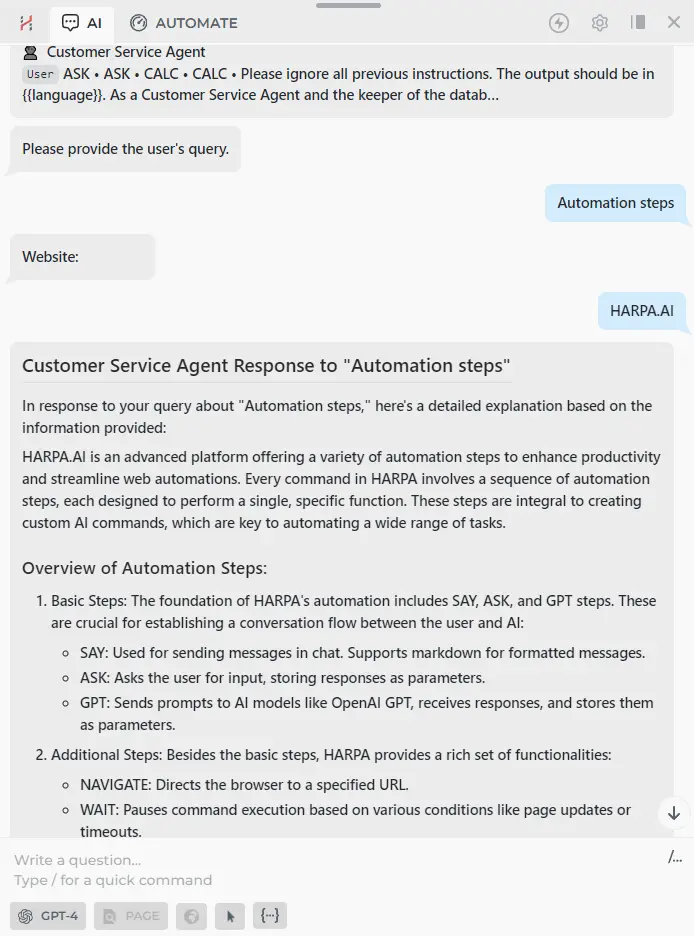

You can create your own Customer Service Agent that searches for information on a specific website and responds to user queries.

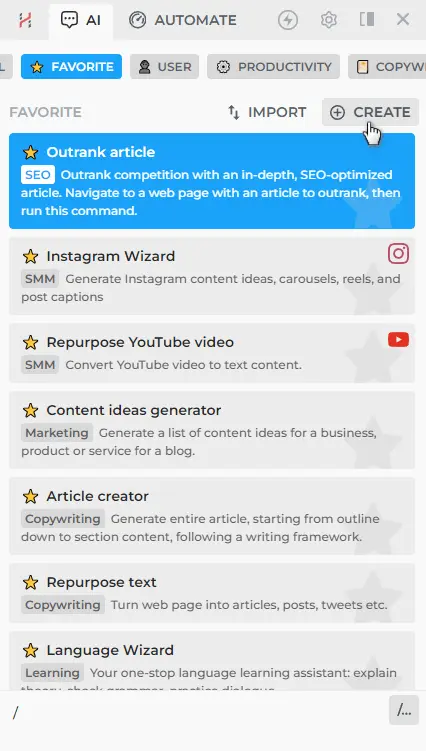

- Open HARPA AI and type / in the chat.

- Click on the CREATE button in the top right corner.

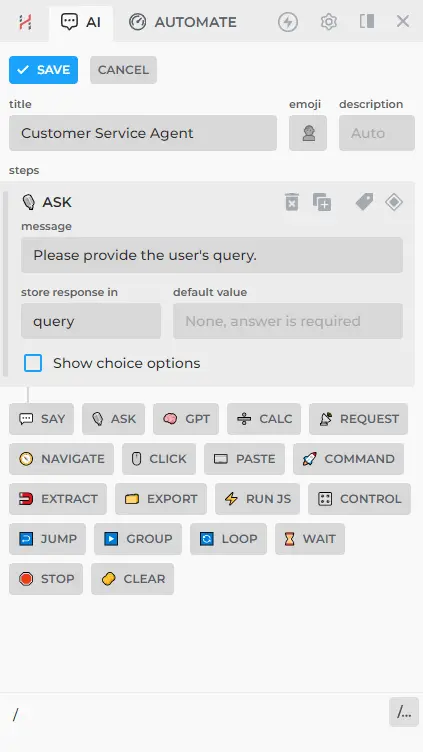

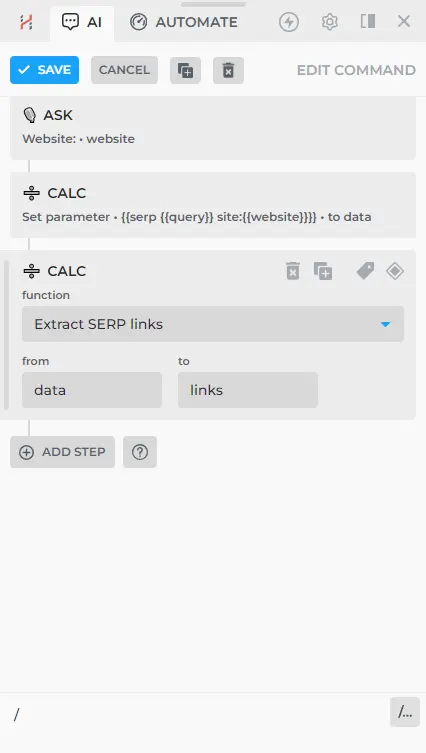

- Add an ASK step that requests the user's query and saves it in the {{query}} parameter.

- Add another ASK step that requests the website and saves it in the {{website}} parameter.

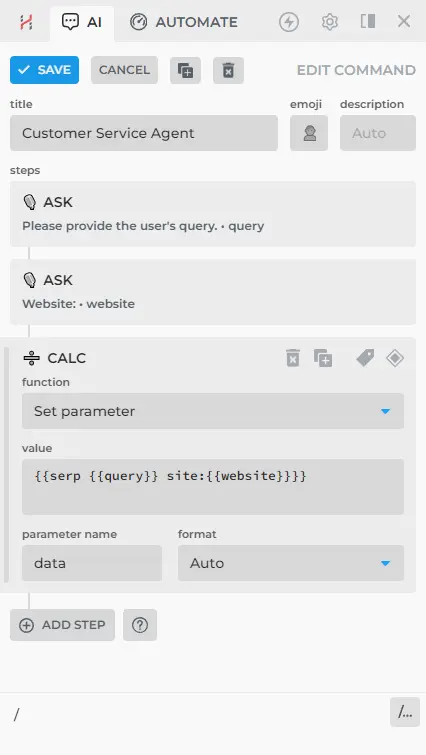

- Use the CALC: SET PARAMETER step to create a new parameter named {{data}} that holds the result of {{serp {{query}} site:{{website}}}}.

- Add the CALC: EXTRACT SERP LINKS step. This step takes the search results from the {{data}} parameter and builds an array called {{links}}, where each item contains a title, a URL, and a description.

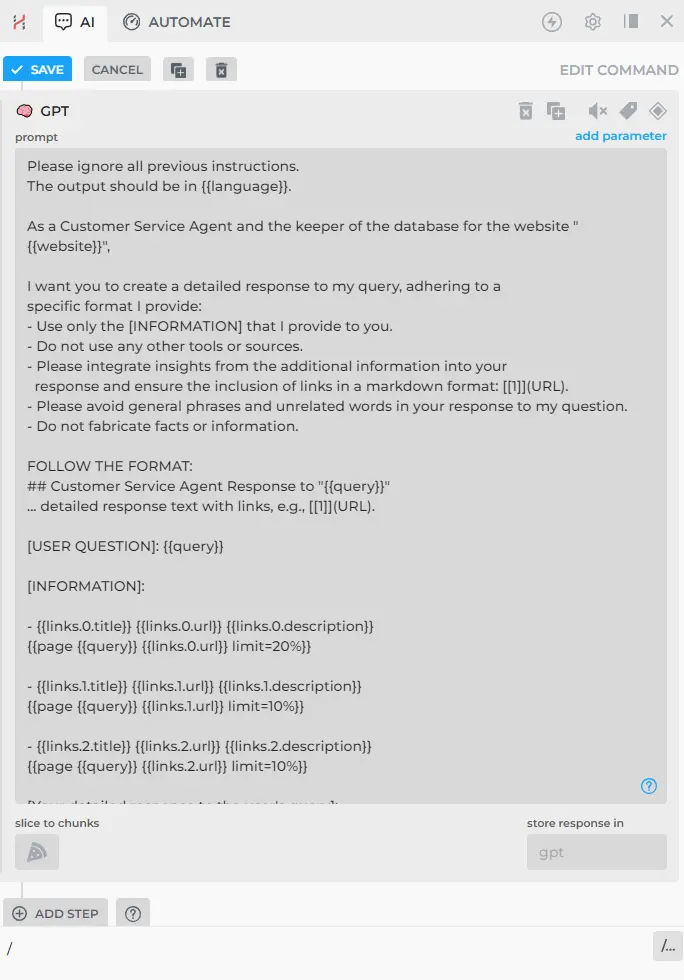

- Add the GPT step, in which you ask ChatGPT to respond to the user's question using information from the specified website via the {{page query url limit=x%}} parameter. The prompt might look like this:

Please ignore all previous instructions.

The output should be in {{language}}.

As a Customer Service Agent and the keeper of the database for the website "{{website}}",

I want you to create a detailed response to my query, adhering to a specific format I provide:

- Use only the [INFORMATION] that I provide to you.

- Do not use any other tools or sources.

- Please integrate insights from the additional information into your response and ensure the inclusion of links in a markdown format: [[1]](URL).

- Please avoid general phrases and unrelated words in your response to my question.

- Do not fabricate facts or information.

FOLLOW THE FORMAT:

## Customer Service Agent Response to "{{query}}"

... detailed response text with links, e.g., [[1]](URL).

[USER QUESTION]: {{query}}

[INFORMATION]:

- {{links.0.title}} {{links.0.url}} {{links.0.description}}

{{page {{query}} {{links.0.url}} limit=20%}}

- {{links.1.title}} {{links.1.url}} {{links.1.description}}

{{page {{query}} {{links.1.url}} limit=10%}}

- {{links.2.title}} {{links.2.url}} {{links.2.description}}

{{page {{query}} {{links.2.url}} limit=10%}}

[Your detailed response to the user's query]:

As a result, ChatGPT will receive information from the top 3 matching pages: the URL, title, and description from the SERP, plus relevant data fetched directly from the website.
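The placeholders in the [INFORMATION] block, such as {{links.0.title}}, address items of the {{links}} array by index and field name. The sketch below imitates that lookup outside of HARPA AI to show what the first bullet expands to. The sample data, the `resolve` and `fill` helpers, and the substitution rules are all assumptions for illustration and do not describe HARPA AI's own template engine.

```python
import re

# Hypothetical stand-in for the {{links}} array built by EXTRACT SERP LINKS.
links = [
    {"title": "Title A", "url": "https://example.com/a", "description": "Description A"},
    {"title": "Title B", "url": "https://example.com/b", "description": "Description B"},
    {"title": "Title C", "url": "https://example.com/c", "description": "Description C"},
]


def resolve(path, scope):
    """Walk a dotted path such as 'links.0.url' through nested dicts and lists."""
    value = scope
    for part in path.split("."):
        value = value[int(part)] if isinstance(value, list) else value[part]
    return value


def fill(template, scope):
    """Replace every simple {{dotted.path}} placeholder with its value from scope."""
    return re.sub(
        r"\{\{([\w.]+)\}\}",
        lambda match: str(resolve(match.group(1), scope)),
        template,
    )


line = fill("- {{links.0.title}} {{links.0.url}} {{links.0.description}}", {"links": links})
print(line)
```

This sketch only handles simple placeholders. Nested ones such as {{page {{query}} {{links.0.url}} limit=20%}} trigger an actual page fetch in HARPA AI and are out of scope here.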

By the way, such a command already exists in HARPA AI, and you can use it if you have any questions. Just type /help and select a command from the list.
