Prompt Flags

# Connection Model Flags

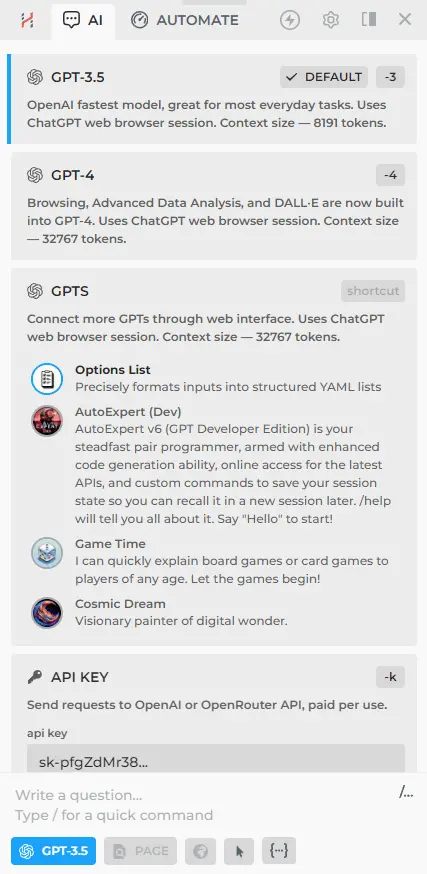

The connections menu lists five connection flags: -3, -4, -c, -b, and -k. Use them in chat or in the GPT step of a custom command to choose which model, such as Claude or ChatGPT, runs that step.

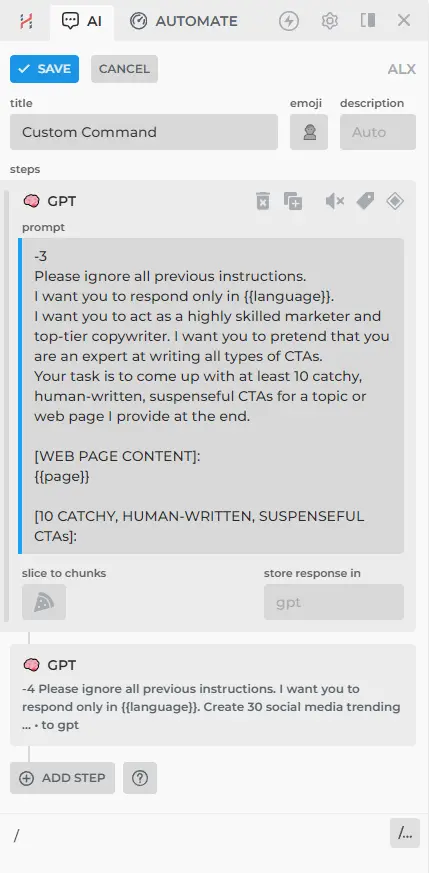

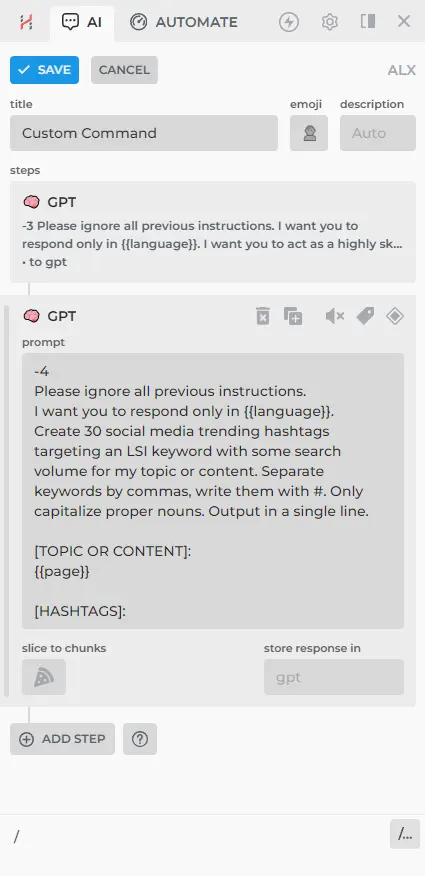

You can use flags, such as "-4", "-3", and "-k", to switch between models within a single custom command. For example:

- Adding the "-3" flag to the first GPT step will generate a response using an efficient model.

- Adding the "-4" flag to the second GPT step will generate a response using a premium model.

This makes it easy to switch between faster, efficient models and slower but more advanced premium models.
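As a rough illustration of per-step model switching, the sketch below routes each GPT step to a model tier based on a leading flag. It is not HARPA's implementation: the function name `route_step`, the `MODEL_TIER_BY_FLAG` table, and the default tier are all assumptions made for this example, and only the two tiers named above are modeled.

```python
# Hypothetical flag-to-tier table; "-3" and "-4" follow the text above,
# the other connection flags are intentionally left out.
MODEL_TIER_BY_FLAG = {"-3": "efficient", "-4": "premium"}


def route_step(step_prompt: str, default_tier: str = "efficient") -> tuple[str, str]:
    """Return (tier, prompt_without_flag) for a single GPT step."""
    text = step_prompt.strip()
    first, _, rest = text.partition(" ")
    if first in MODEL_TIER_BY_FLAG:
        return MODEL_TIER_BY_FLAG[first], rest.strip()
    # No recognized flag: fall back to an assumed default tier.
    return default_tier, text


if __name__ == "__main__":
    steps = [
        "-3 Summarize the page in five bullets.",
        "-4 Turn the summary into a polished email.",
    ]
    for step in steps:
        tier, prompt = route_step(step)
        print(f"{tier}: {prompt}")
```

In this sketch the cheap first step produces the raw material and the premium second step refines it, which mirrors the two-step example above.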

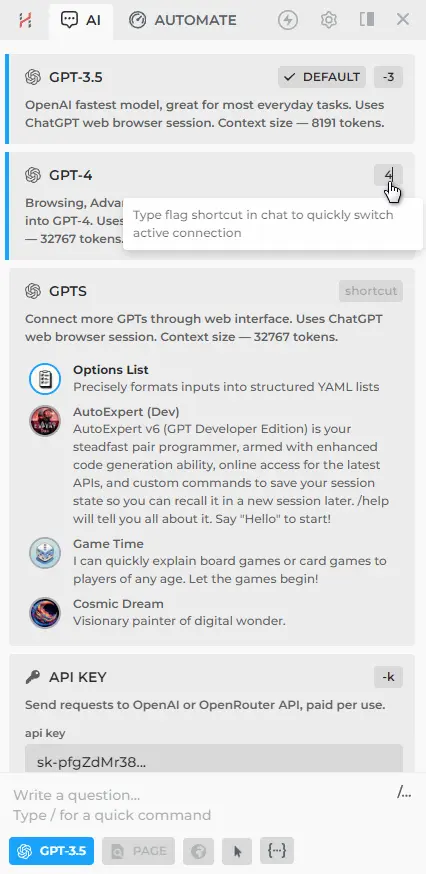

If these flags seem inconvenient, you can change them:

- Go to the connections menu.

- Select the desired connection and click on the flag icon in the top right corner.

- Type a new flag name that you find easier to remember or use.

# Page-aware mode flag

The page-aware flag (-p) sends the content of the currently open page along with your prompt.

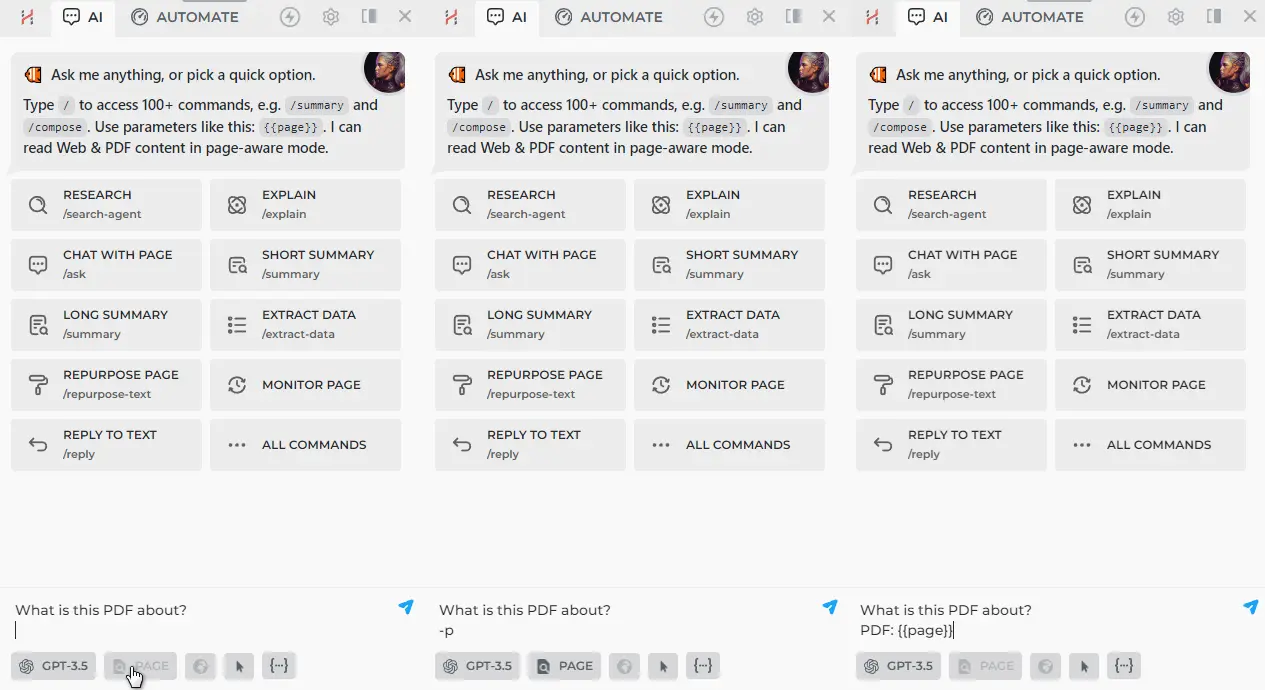

Pressing the [🔍 PAGE] button, asking "-p What is this PDF about?", and asking "What is this PDF about? PDF: {{page}}" all do effectively the same thing and give the LLM the same input.
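To make the equivalence concrete, here is a minimal sketch of how the three variants could resolve to a prompt that carries both the question and the page content. The function `expand_page_prompt`, its parameters, and the way the page text is appended are assumptions for illustration; the actual formatting HARPA uses is not documented here.

```python
def expand_page_prompt(prompt: str, page_text: str, page_button: bool = False) -> str:
    """Resolve a chat prompt into the text sent to the LLM (illustrative only)."""
    text = prompt.strip()

    # Variant 3: the {{page}} parameter is replaced by the page content.
    if "{{page}}" in text:
        return text.replace("{{page}}", page_text)

    # Variant 2: a leading -p flag requests page-aware mode.
    if text.startswith("-p "):
        text = text[3:].strip()
        page_button = True

    # Variants 1 and 2: attach the page content after the question.
    if page_button:
        return f"{text}\n\n{page_text}"
    return text


if __name__ == "__main__":
    page = "Quarterly report: revenue grew 12%."
    variants = [
        expand_page_prompt("What is this PDF about?", page, page_button=True),
        expand_page_prompt("-p What is this PDF about?", page),
        expand_page_prompt("What is this PDF about? PDF: {{page}}", page),
    ]
    for resolved in variants:
        print(repr(resolved))
```

Each resolved prompt contains the question followed by the page content, which is the sense in which the three inputs are effectively the same.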

# Web-aware mode flag

The web-aware flag (-w) searches the web for your prompt and sends the search results along with it.

This is useful for getting information from the web about recent events.

# Isolated mode flag

Using the -i flag on an API or CloudGPT connection forces the AI to ignore the chat history and sends the prompt in isolation.

The isolated flag reduces token consumption, especially in multi-chunk GPT steps. Responses to isolated requests still appear in the chat history and can be referenced with the {{gpt}} parameter.
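The token saving comes from what is sent with each request. The sketch below assumes the common role/content chat message format and uses hypothetical helpers (`build_messages`, `send`, `fake_model`); it shows an isolated request sending only the new prompt while the reply is still recorded in the history.

```python
def build_messages(history: list[dict], prompt: str, isolated: bool) -> list[dict]:
    """Assemble the request payload; isolated requests skip prior history."""
    new_message = {"role": "user", "content": prompt}
    if isolated:
        return [new_message]
    return [*history, new_message]


def send(history: list[dict], prompt: str, isolated: bool, call_model) -> str:
    """Send a prompt and record the exchange in the history either way."""
    reply = call_model(build_messages(history, prompt, isolated))
    history.append({"role": "user", "content": prompt})
    history.append({"role": "assistant", "content": reply})
    return reply


def fake_model(messages: list[dict]) -> str:
    """Stand-in for a real model call; reports how many messages it received."""
    return f"reply to {len(messages)} message(s)"


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "Earlier question"},
        {"role": "assistant", "content": "Earlier answer"},
    ]
    print(send(history, "Summarize chunk 1", True, fake_model))
    print(send(history, "Summarize chunk 2", False, fake_model))
    print(len(history))
```

In a multi-chunk step, each non-isolated request would resend every earlier chunk and reply, so the payload grows with each chunk; the isolated variant keeps each request at a single message.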

# Combining Flags

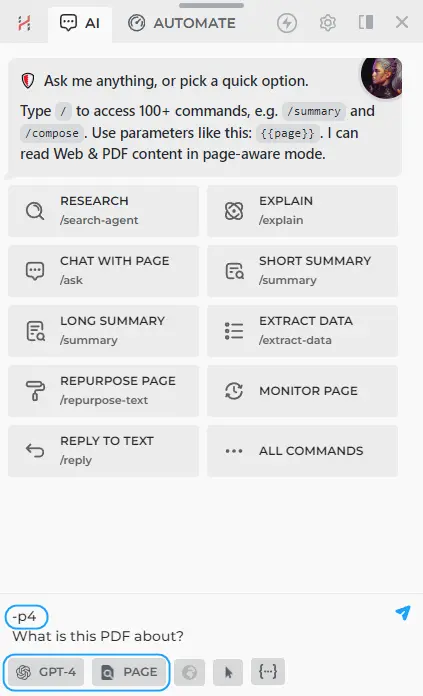

To combine two flags, write them in sequence after a single "-". For instance, to combine the page-aware and premium model flags, enter "-p4" in chat.
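One way to picture combined flags is as a single token that expands into individual one-character flags. The sketch below is an assumption-laden illustration, not HARPA's parser: `split_flags` is a hypothetical name, it only inspects the first word of the message, and it assumes every flag is a single character, so renamed multi-character flags would need different handling.

```python
def split_flags(message: str) -> tuple[list[str], str]:
    """Split a leading combined flag token such as "-p4" into separate flags."""
    text = message.strip()
    head, _, rest = text.partition(" ")
    if len(head) > 1 and head.startswith("-"):
        # Each character after the dash is treated as its own flag.
        return [f"-{ch}" for ch in head[1:]], rest.strip()
    return [], text


if __name__ == "__main__":
    flags, prompt = split_flags("-p4 What is this PDF about?")
    print(flags)
    print(prompt)
```

Here "-p4" expands to the page-aware flag and the premium model flag, and the remainder of the message is the prompt itself.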

All rights reserved © HARPA AI TECHNOLOGIES LLC, 2021 — 2026